Why Churn Prediction ≠ Churn Reduction, and What To Do Instead

On the inherent shortcomings of churn prediction, and how customer retention can be improved with uplift modeling and dynamic point of cancellation offers.

1 Reducing customer churn is the best way to boost growth

The very unfortunate truth about customer churn is that it scales with your business. Meaning, the more your business grows, the more customers you'll have cancelling every month. In order to keep pace with your expanding churn numbers, you'll either need to find magical exponential growth, reduce your churn, or risk hitting an early growth ceiling.

Let's say, for example, our software-as-a-service (SaaS) product has an average monthly revenue of $50/customer. When we first start up, we're adding 100 customers a month, growing our MRR by $5,000 each month. In the second month, 7 of those customers cancel their subscriptions (a 7% churn rate - the industry average for B2C SaaS companies). Not a big deal - we're still netting 93 new customers! In the following month, another 7% churn - 13 of our 193 customers entering the month. But, we've added another 100 new customers, still netting 87 new subscriptions and a healthy $4,350 uptick in MRR.

So we continue on our merry way adding to our top line revenue each month. Then, seemingly out of nowhere, churn rears its ugly head. A few years in, we've crept up to roughly 1,400 customers, and guess what? Thanks to our 7% churn rate, we're only breaking even with the 100 new customers that sign up each month. Our customer base (and revenue) is no longer growing. We have officially hit our growth ceiling: 100 new customers / 7% churn ≈ 1,430 customers and roughly $71,000 MRR.

Unfortunately, this is far from a hypothetical example for me. Churn became a very real problem very quickly for Wavve.co. Luckily for all of us, a small reduction in churn can yield a massive boost in the growth ceiling. To continue with the numbers above, let's cut the churn rate from 7% to 5%. With this reduction, the growth ceiling jumps from roughly $71,000 MRR to $100,000 MRR, an increase of about 40%, or roughly $340K in ARR.
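The ceiling in this example follows from simple arithmetic: growth stops once the customers lost each month equal the customers added. A minimal sketch of that calculation, assuming a constant number of signups and a constant churn rate (the function names are my own, for illustration only):

```python
def growth_ceiling(new_customers_per_month: float, monthly_churn_rate: float) -> float:
    """Customer count at which monthly churn equals monthly signups."""
    if not 0 < monthly_churn_rate <= 1:
        raise ValueError("monthly_churn_rate must be in (0, 1]")
    return new_customers_per_month / monthly_churn_rate


def simulate_customers(new_per_month: float, churn_rate: float, months: int) -> list:
    """Customer count at the end of each month: churn first, then add signups."""
    customers = 0.0
    history = []
    for _ in range(months):
        customers = customers * (1 - churn_rate) + new_per_month
        history.append(customers)
    return history


REVENUE_PER_CUSTOMER = 50  # average monthly revenue per customer, from the example

for churn in (0.07, 0.05):
    ceiling = growth_ceiling(100, churn)
    mrr = ceiling * REVENUE_PER_CUSTOMER
    print(f"{churn:.0%} churn: {ceiling:,.0f} customers, ${mrr:,.0f} MRR")
```

With the numbers above, this gives a ceiling of about 1,429 customers ($71,429 MRR) at 7% churn and 2,000 customers ($100,000 MRR) at 5% churn. The simulation approaches the ceiling gradually rather than hitting it on a particular date.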

I would argue that reducing customer churn is the single best thing that most SaaS companies can be doing right now. As fun as growth is, keeping the customers you do have is far cheaper than acquiring new ones. With this in mind, let's take an in-depth look at what can be done to reduce churn. First, we're going to dive into churn prediction, where companies try to figure out which customers are going to cancel their subscriptions, and try to do something about it before that happens. This is often one of the first steps that a company will take once they realize their churn rate is becoming the limiting factor in their future growth.

2 How churn prediction works in practice

At its core, churn prediction attempts to put customers into two buckets:

- Customers who will churn

- Customers who will not churn.

Simple enough, right?

As far as the data science is concerned, churn prediction can be treated as a standard classification problem. We can look at a user’s behavior – how long they’ve been a customer, when they were last active, how much they’ve been paying per month, whether they’ve used your new feature, etc. – and train a machine learning model to predict how likely that user is to cancel their subscription in the next month.

There are a variety of machine learning models that can predict churn reasonably well. In practice, decision trees and logistic regression are the most commonly used. I wholeheartedly predict that we will see a shift towards recurrent neural network (RNN) models in the near future, as they provide a much more natural model for this problem. I’ll be writing on that soon, but for now, let’s put aside which ML model is used, and turn our focus to how they are used.
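To make the classification framing concrete, here is a minimal sketch of a logistic regression churn model written in plain Python. Everything in it is an assumption for illustration: the two features (tenure and weeks since last activity), the synthetic data, and the training settings. A real project would typically use an established machine learning library and real customer data.

```python
import math
import random


def sigmoid(z: float) -> float:
    # Clamp the score to avoid overflow in math.exp for extreme values.
    z = max(-30.0, min(30.0, z))
    return 1.0 / (1.0 + math.exp(-z))


def predict_churn_probability(weights, x) -> float:
    """Probability of churning next month; weights[0] is the bias term."""
    score = weights[0] + sum(w * v for w, v in zip(weights[1:], x))
    return sigmoid(score)


def train_logistic_regression(rows, labels, lr=0.1, epochs=500):
    """Fit weights with batch gradient descent on the log loss."""
    weights = [0.0] * (len(rows[0]) + 1)
    for _ in range(epochs):
        grads = [0.0] * len(weights)
        for x, y in zip(rows, labels):
            error = predict_churn_probability(weights, x) - y
            grads[0] += error
            for j, value in enumerate(x):
                grads[j + 1] += error * value
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grads)]
    return weights


# Synthetic, made-up customers: (tenure in years, weeks since last active).
random.seed(0)
rows, labels = [], []
for _ in range(400):
    tenure_years = random.uniform(0, 3)
    weeks_inactive = random.uniform(0, 8)
    # Assumed ground truth: inactivity raises churn risk, tenure lowers it.
    p_churn = sigmoid(0.8 * weeks_inactive - 1.0 * tenure_years - 2.0)
    rows.append((tenure_years, weeks_inactive))
    labels.append(1 if random.random() < p_churn else 0)

weights = train_logistic_regression(rows, labels)
engaged = predict_churn_probability(weights, (2.5, 0.5))
dormant = predict_churn_probability(weights, (0.5, 7.0))
print(f"engaged customer: {engaged:.2f}, dormant customer: {dormant:.2f}")
```

The model outputs a probability per customer rather than a hard label, which is what makes the cutoff-based targeting discussed next possible.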

2.1 What is done with churn predictions

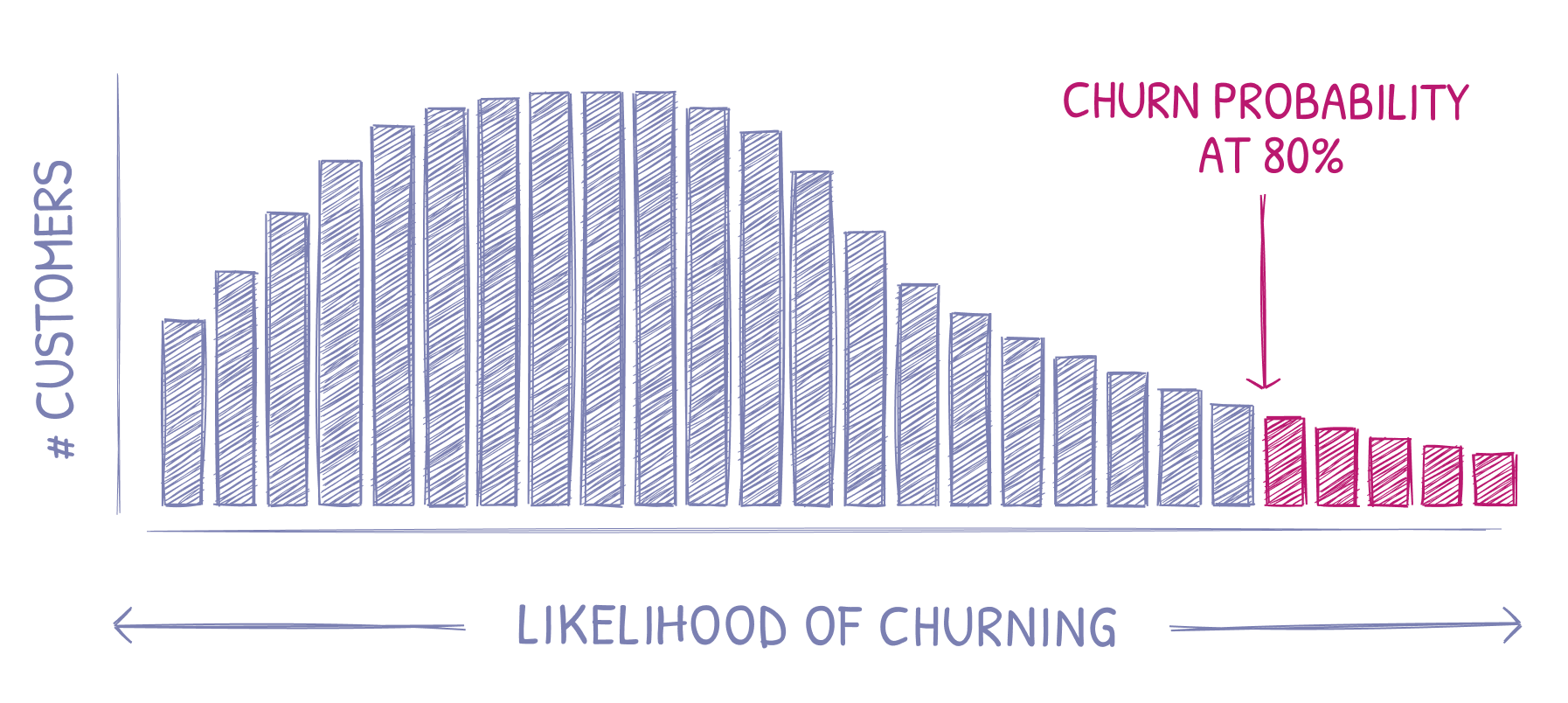

Once we’ve trained a model to predict how likely a customer is to churn in the next month - how can we use that information to improve our bottom line? In particular, how can we use it to prevent cancellations? Below, I've plotted a hypothetical distribution of our customers and their likelihood to churn in the next month following what a typical distribution may look like for a SaaS company. You'll note that I've also marked a cutoff at 80%, highlighting the customers that our model predicted had at least an 80% probability to churn in the coming month.

In developing our churn prediction model, the general idea is that we pick out the customers that are deemed “likely to churn” and hit them with a retention campaign, hoping to reinvigorate their love of the product, and, with a bit of luck on our side, prevent them from cancelling their subscription.
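In code, picking out the "likely to churn" customers amounts to a filter over the model's scores. A small sketch, assuming the model's output is available as a mapping from customer ID to predicted churn probability (the IDs and scores here are invented):

```python
CHURN_THRESHOLD = 0.80  # the 80% cutoff described above


def select_for_retention_campaign(churn_scores: dict, threshold: float = CHURN_THRESHOLD) -> list:
    """Return the IDs of customers whose predicted churn probability meets the cutoff."""
    return sorted(cid for cid, p in churn_scores.items() if p >= threshold)


# Hypothetical model outputs: customer ID -> predicted churn probability.
scores = {"cus_a": 0.05, "cus_b": 0.83, "cus_c": 0.41, "cus_d": 0.97}
targeted = select_for_retention_campaign(scores)
print(targeted)  # ['cus_b', 'cus_d']
```

Note that this selection looks only at how likely a customer is to churn, not at whether a campaign would change their mind.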

2.2 The retention campaign

A good retention campaign is product specific, but in general they tend to follow a lot of the same patterns. The objectives here are to (i) re-engage customers so they get more value out of your product and/or (ii) present a customer offer to get them to stick around. Common retention tactics can include:

- An email newsletter to bring your product to the front of customers’ minds

- A new product feature announcement

- A showcase of how other customers are using your product

- “Customer success” calls, or direct emails to customers you want to re-engage

- A reminder to use the credits left in their account (e.g., Audible)

- A one-time discount (e.g., Uber)

- An offer to put a customer’s subscription on pause (e.g., Audible)

- An offer to switch (downgrade) a customer’s plan

Depending on your product, these campaigns should see varying (but typically pretty strong) engagement rates. At Wavve.co, we’ve had nearly 35% of customers accept an offer to temporarily pause their account.

So, we’re getting after the right customers using our churn prediction model, and the retention campaigns are sufficiently engaging – all is well, right?

3 Where churn prediction goes wrong

Let’s briefly back up and refocus on what our end goal is. We set out to reduce churn. In an attempt to do this, we designed a machine learning model which sifted through customer data and found the customers who were most likely to cancel their accounts. We then targeted these high risk customers with retention campaigns, hoping to prevent them from churning.

Unfortunately, it isn’t so simple. In this section, I’ll dig into what can go wrong with this pattern of churn prediction and customer re-engagement, including disturbing the “do-not-disturb” customers, sending discounts to people who wouldn’t have churned anyway, and the self-biasing nature of churn prediction once we start using its predictions to decide who to target with retention campaigns.

3.1 Churn prevention ≠ churn minimization

When we marked a customer to be targeted with a retention campaign, we took action. We reached out to these people to ask if they wanted to check out our new feature, or if they wanted more credits or a discount, etc. And some of these customers, who would have otherwise cancelled their subscription, decided to stick around. Great! But, at the same time, by knocking on these users’ doors, we’ve also prompted some customers, who would not have otherwise churned, to cancel their subscriptions. Just a few weeks ago, Audible sent me a reminder that I had 2 credits to use. As luck would have it, that was just the reminder I needed to cancel my subscription! Clearly, this is the exact opposite of the result Audible had hoped for with their churn prevention campaign.

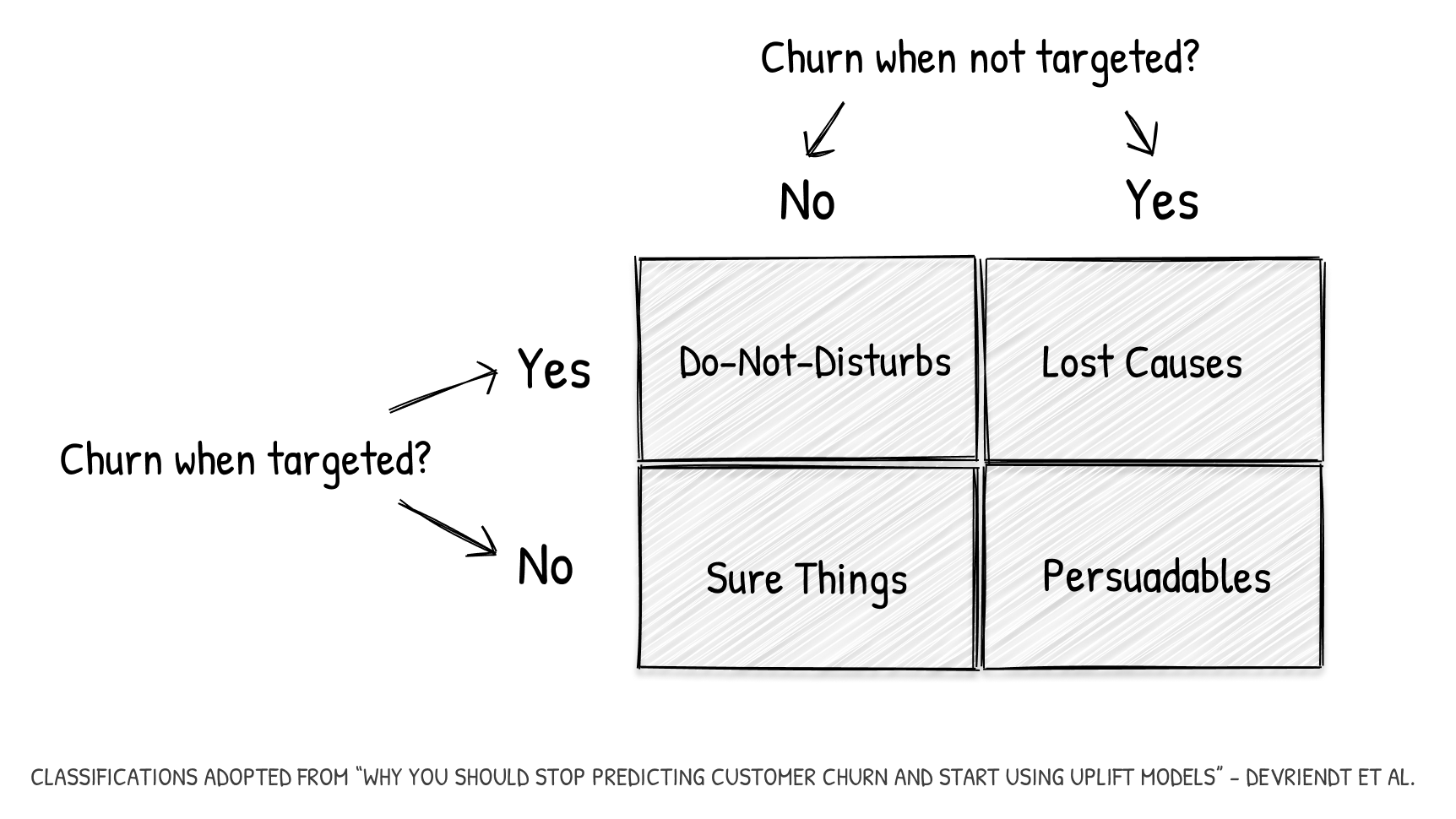

3.1.1 Four customers

To make things concrete, let’s categorize our customers into four groups based on their churn behavior with and without a retention campaign. We’ll get into the details of using different retention campaigns later, but for now let’s simply use "targeted" and "not targeted" to represent customers who were or were not treated with a retention campaign, respectively.

Breaking these down:

- Do-not-disturbs. Customers who won’t churn unless we use a retention campaign. This is me cancelling my Audible subscription when they told me I had credits to use. Otherwise, I wouldn’t have thought about it and would have stayed a subscriber.

- Sure things. These are your adamant subscribers – the customers who will be with you next month whether or not we use a retention campaign on them. Love this group.

- Lost causes. This is just the opposite. The customers in this group are going to cancel their subscription no matter what we do.

- Persuadables. This is the group we want to go after with retention campaigns. These customers are going to churn if we don’t do anything but will stick around if we use a retention campaign on them.

In an ideal world, our churn prevention techniques would strictly target the fourth group, the persuadables. In reality, our predictive models are far from perfect and, regrettably, we are actually losing profits every time we target a customer who belongs to any of the other three groups:

- Do-not-disturbs. Worst case scenario – by intervening, we lose customers who would otherwise have continued as subscribers.

- Sure things. When we allocate retention campaigns to this group, we are lowering profits by giving credits, discounts, and other costly perks to customers who would have kept paying us at full cost.

- Lost causes. For these customers, we are losing the cost of running the retention campaign. This can vary widely between companies. If we’re just sending an email, then not much is lost. If we’re a B2B SaaS company doing manual outreach and customer calls, this can get quite costly.
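The four groups and the cost of targeting each can be written down as a small payoff table. The sketch below is a simplification under stated assumptions: a one-month horizon, a flat outreach cost per targeted customer, and a discount that is redeemed by every targeted customer who stays. The function names and dollar figures are illustrative only.

```python
def segment(churns_if_targeted: bool, churns_if_not_targeted: bool) -> str:
    """Name the group from the two outcomes (only one is ever observed in practice)."""
    if churns_if_targeted and churns_if_not_targeted:
        return "lost cause"
    if churns_if_targeted:
        return "do-not-disturb"
    if churns_if_not_targeted:
        return "persuadable"
    return "sure thing"


def value_of_targeting(group: str, monthly_revenue: float,
                       campaign_cost: float, discount: float) -> float:
    """Change in next month's profit from targeting one customer in `group`."""
    if group == "persuadable":
        # We keep revenue we would have lost, minus the discount and outreach cost.
        return monthly_revenue - discount - campaign_cost
    if group == "sure thing":
        # They would have paid full price anyway, so the discount is given away.
        return -discount - campaign_cost
    if group == "lost cause":
        # They leave regardless, so only the outreach cost is lost.
        return -campaign_cost
    if group == "do-not-disturb":
        # The campaign itself triggers the cancellation.
        return -monthly_revenue - campaign_cost
    raise ValueError(f"unknown group: {group}")


for group in ("persuadable", "sure thing", "lost cause", "do-not-disturb"):
    change = value_of_targeting(group, monthly_revenue=50, campaign_cost=1, discount=10)
    print(f"{group}: {change:+.0f}")
```

With a $50 subscription, a $1 outreach cost, and a $10 discount, only the persuadable comes out positive (+39). The sure thing costs 11, the lost cause 1, and the do-not-disturb 51.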

3.2 Churn prediction is self-biasing

Ok, so we’ve seen how important it is to nail down specifically the segment of customers who are planning to churn unless we do something about it - the persuadables. Unfortunately, when it comes to segmenting customers into these four groups, we will never have complete information. We can, at best, narrow down each customer into one of two groups. For example, if we don’t use a retention campaign and a customer churns, we can never be sure if they would have cancelled had we targeted them with a campaign – we’ll never know if they were a lost cause or a persuadable.

As a result, if a machine learning model is continuously trained on live churn data, it will create a self-biasing feedback loop. The retention campaigns that we initiate against customers who we believe are high churn risks directly bias our results. We can, of course, create a control group where we withhold any retention efforts, but this naturally goes against our incentives to reduce churn as much as possible.
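If you do decide to keep a control group, one common way to carve it out is a deterministic hash of the customer ID, so that the same customer always lands in the same group. This is a sketch of that general technique, not something described by the source. The 10% holdout fraction and the salt string are arbitrary placeholders.

```python
import hashlib


def in_holdout(customer_id: str, holdout_fraction: float = 0.10,
               salt: str = "retention-holdout") -> bool:
    """Deterministically assign a customer to the untreated control group."""
    digest = hashlib.sha256(f"{salt}:{customer_id}".encode()).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return bucket < holdout_fraction


# Hypothetical customer IDs; held-out customers receive no retention campaign.
customers = [f"cus_{i}" for i in range(1000)]
control = [c for c in customers if in_holdout(c)]
print(f"{len(control)} of {len(customers)} customers held out as a control group")
```

Because assignment does not depend on the churn score, the held-out customers give an unbiased view of what happens when no campaign is sent.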

4 What we should do instead of predicting churn

Churn prediction can provide a step in the right direction, but it is hardly a solution in itself. Fundamentally, we don’t really care which customers are likely to churn nearly as much as whether or not we can do something about it, and what that something might be.

4.1 Adapt the churn prediction model into a customer uplift model

Recent research efforts have turned attention to customer uplift modeling in lieu of churn prediction. In short, customer uplift modeling changes the question from “will this customer churn” to “will a retention campaign prevent this customer from churning”. That is, how likely is it that this customer belongs to our persuadables group?

Concretely, uplift modeling looks at the predicted behavior after treatment (treatment, in our case, being a retention campaign). This is an important distinction in comparison to churn prediction. Instead of trying to generally predict if a customer will cancel their subscription, we are aiming to predict expected behavior after different treatments. Because uplift modeling takes into account which treatment is used and observes the results after that treatment has been applied, it eliminates the self-biasing aspect inherent in churn prediction.

Furthermore, we can answer not just whether a retention campaign will work, but also which retention campaign would be most effective for our bottom line: for example, the smallest discount that we can offer to prevent a customer from churning.
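In its simplest form, uplift is the difference in retention between customers who received a treatment and comparable customers who did not. The sketch below estimates that difference from made-up experiment records and picks the campaign with the best expected net gain. It assumes treatments were assigned at random and ignores customer features entirely; a real uplift model would estimate these effects per customer profile. The treatment names and costs are hypothetical.

```python
from collections import defaultdict


def retention_rates(records):
    """Retention rate per treatment from (treatment, retained) pairs."""
    counts = defaultdict(lambda: [0, 0])  # treatment -> [retained, total]
    for treatment, retained in records:
        counts[treatment][0] += int(retained)
        counts[treatment][1] += 1
    return {t: kept / total for t, (kept, total) in counts.items()}


def uplift(records, control="none"):
    """Retention gain of each treatment over the untreated control group."""
    rates = retention_rates(records)
    return {t: r - rates[control] for t, r in rates.items() if t != control}


def best_campaign(records, monthly_revenue, cost, control="none"):
    """Treatment with the highest expected net gain per customer, else the control."""
    gains = {t: u * monthly_revenue - cost[t]
             for t, u in uplift(records, control).items()}
    winner = max(gains, key=gains.get)
    return winner if gains[winner] > 0 else control


# Made-up experiment: 100 customers per arm, with 80, 82, and 90 retained.
records = (
    [("none", True)] * 80 + [("none", False)] * 20
    + [("email", True)] * 82 + [("email", False)] * 18
    + [("discount_10", True)] * 90 + [("discount_10", False)] * 10
)
cost = {"email": 0.10, "discount_10": 9.00}  # assumed expected cost per targeted customer
print(uplift(records))
print(best_campaign(records, monthly_revenue=50, cost=cost))
```

In this invented data the discount has the larger uplift (10 points versus 2), but the email wins on net gain because the discount costs more than the revenue it saves. That is the kind of trade-off churn prediction alone cannot surface.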

Uplift modeling, as a whole, has applications outside of SaaS business. It is being integrated into personalized medicine to optimize treatment for each individual. It also has other applications inside the SaaS world in targeting customers with up-selling or cross-selling offers.

4.2 A more direct solution: point of cancellation offers

Customer uplift modeling is a very powerful tool. Alas, it takes a lot of time, developer resources, and data to do well. A more practical, and nearly as effective, method is placing relevant offers at your customers' point of cancellation. That is, just as your customer is about to cancel, make them an offer they can’t refuse.

Since we’re presenting offers only when a customer is right on the verge of cancelling, we can rest assured we’re not bothering our “do-not-disturb” customers. We also know that we’re not handing out unnecessary discounts to customers who don’t need any extra incentive to stay subscribed (our second group, the “sure things”).

4.2.1 Making the right offer

The offers that resonate best with your customers are, in large part, business dependent. Some businesses have seasonality attached to them, where offering a “pause” instead of outright cancellation may be very effective. In other businesses, temporary discounts can prove very effective.

These offers can be optimized by dynamically generating them based on customer attributes such as:

- The monthly cost of the customer’s subscription

- How long the customer has been subscribed

- The billing interval of the subscription (monthly, annual, etc.)

Moreover, an exit survey can be used to directly ask a customer why they are cancelling. This very direct method is often the most effective. Once you know exactly why a customer is cancelling, it’s a lot easier to make them an offer that appeals to them.
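A rule-based version of this idea might look like the sketch below, which combines the exit survey answer with the subscription attributes listed above. Every rule, threshold, and reason code here is a hypothetical placeholder, not a recommendation. The right rules depend on your business and should be tested.

```python
def choose_offer(monthly_cost: float, months_subscribed: int,
                 billing_interval: str, cancel_reason: str) -> dict:
    """Pick a point-of-cancellation offer. All rules here are illustrative."""
    if cancel_reason == "not_using_right_now":
        # Seasonal or temporary lull: offer a pause instead of a cancellation.
        return {"type": "pause", "months": 1}
    if cancel_reason == "technical_issue":
        # A conversation with support may resolve the underlying problem.
        return {"type": "support_chat"}
    if cancel_reason == "too_expensive":
        if monthly_cost >= 100:
            # Assumed rule: on expensive plans, suggest a cheaper plan first.
            return {"type": "downgrade"}
        # Assumed rule: longer-tenured customers get a larger temporary discount.
        percent = 30 if months_subscribed >= 12 else 15
        duration = "next 2 months" if billing_interval == "monthly" else "next renewal"
        return {"type": "discount", "percent": percent, "duration": duration}
    return {"type": "none"}


offer = choose_offer(monthly_cost=20, months_subscribed=14,
                     billing_interval="monthly", cancel_reason="too_expensive")
print(offer)
```

Keeping the rules in one small function like this makes it easy to swap in different offers and compare how each performs.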

In testing out which offers are most effective at retaining customers, we are essentially doing uplift modeling. We see how each offer is received by different customer profiles and can optimize our offers appropriately.

5 Implementing a churn solution with Churnkey

To recap, we’ve looked at the four types of customers: (i) the do-not-disturbs, (ii) the sure things, (iii) the lost causes, and (iv) the persuadables. We discussed how we lose money on targeting any of the first three groups with retention campaigns and offers. And then we outlined how churn prediction fundamentally cannot find just the fourth group, the persuadables, due to the biasing intervention of the retention campaigns.

We followed this up with a discussion on changing the question from “who will churn” to “whose churn can we prevent, and how can we prevent it”. Two ways of taking this into practice were summarized: (i) implementing a full-blown customer uplift model and (ii) using point-of-cancellation surveys + dynamic offers.

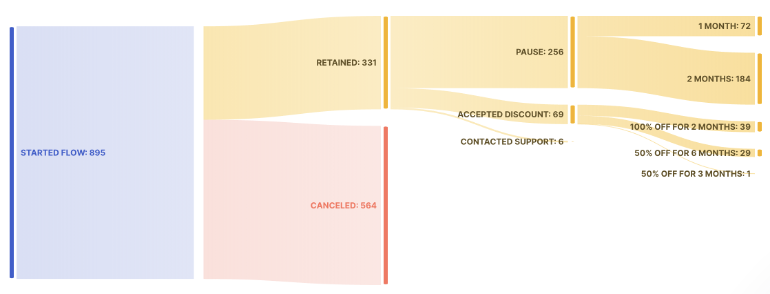

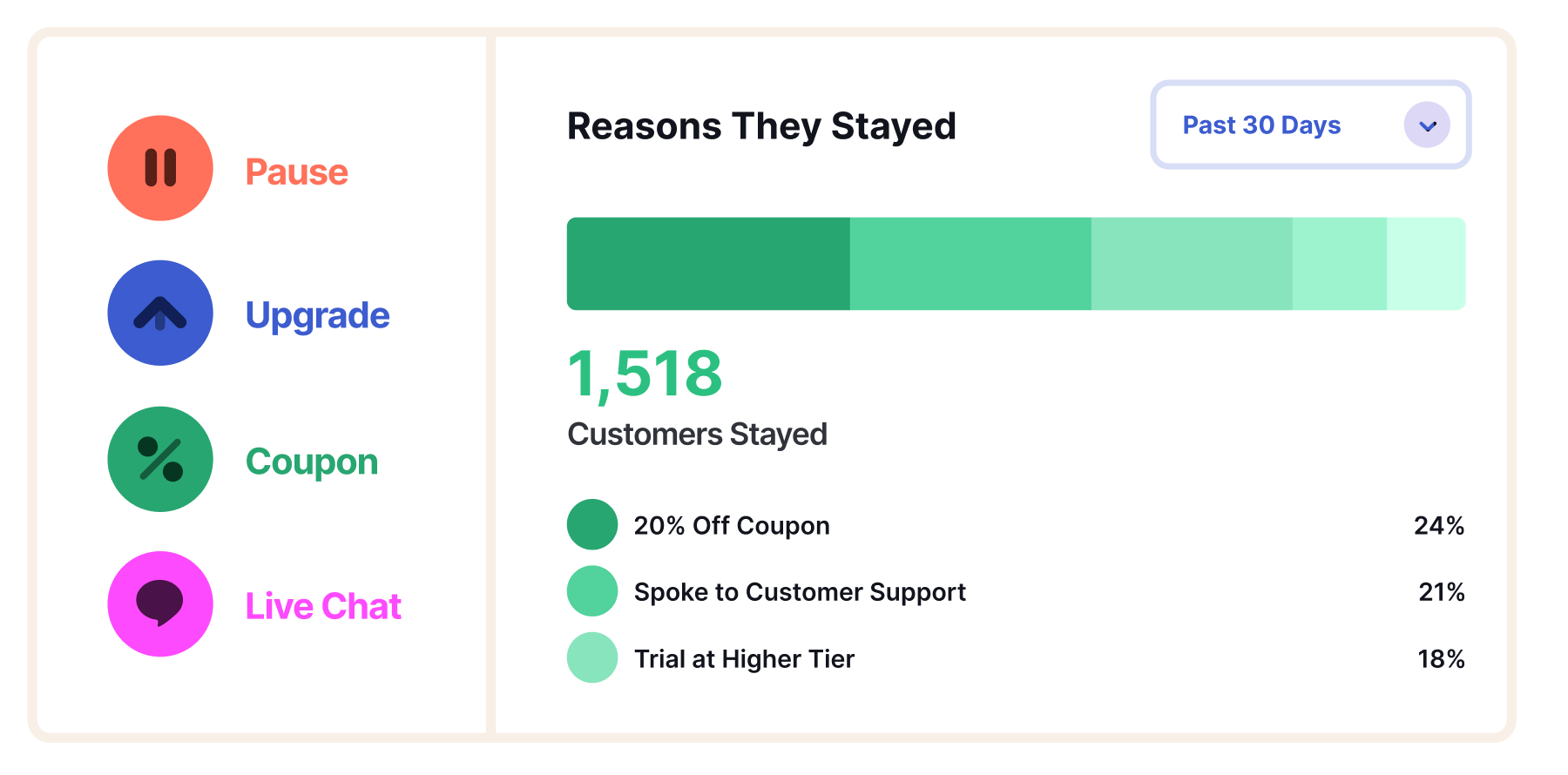

About a year and a half ago, the team at Wavve.co (myself, Nick Fogle, Baird Hall) turned our full focus to reducing churn. Most effectively, this included adding a point of cancellation survey and dynamically generated offers for customers who were about to churn. The results have been way better than we imagined. We’ve retained 30% of customers who click “Cancel Subscription”. Roughly 70% of those customers decide to pause their account instead of cancelling outright, with the remainder continuing their subscription after accepting a temporary discount or after a live chat with our customer support (typically to answer some technical question).

For the last 6 months, the three of us have joined forces with the talented Scott Hurff to build out Churnkey, a drop-in solution to do the same for other SaaS companies. Below are the real results from the last 30 days of using Churnkey on Wavve: 895 customers clicked “Cancel Subscription”, and Churnkey has managed to prevent a remarkable 37% (331) from cancelling.

I hate to be salesy, but it’s hard to overstate the impact of reducing churn on your bottom line, and we’d love nothing more than to help you continue to grow your business. Our results with early customers are overwhelmingly positive, and we're onboarding more companies each week.

Below, I’ll briefly outline the current feature set of Churnkey and leave it to you whether or not you want to get a head start on churn prevention 😉

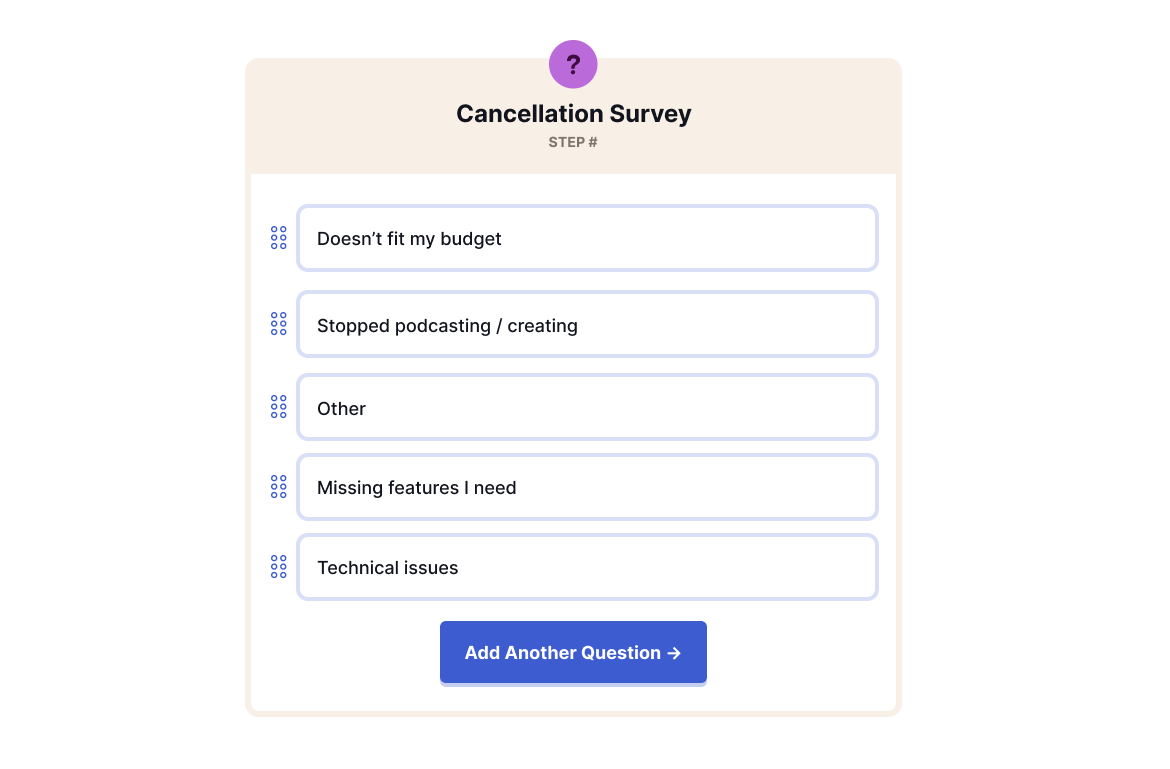

5.1 Custom cancellation survey

One of the best things you can do for your business is to keep a pulse on why people are cancelling their subscriptions. These are people that at one point or another decided to give you money. Now, something has changed. Knowing what that something is not only helps you figure out how you can improve your product, it can also help you figure out how you can get that potential churner to stick around with a custom offer.

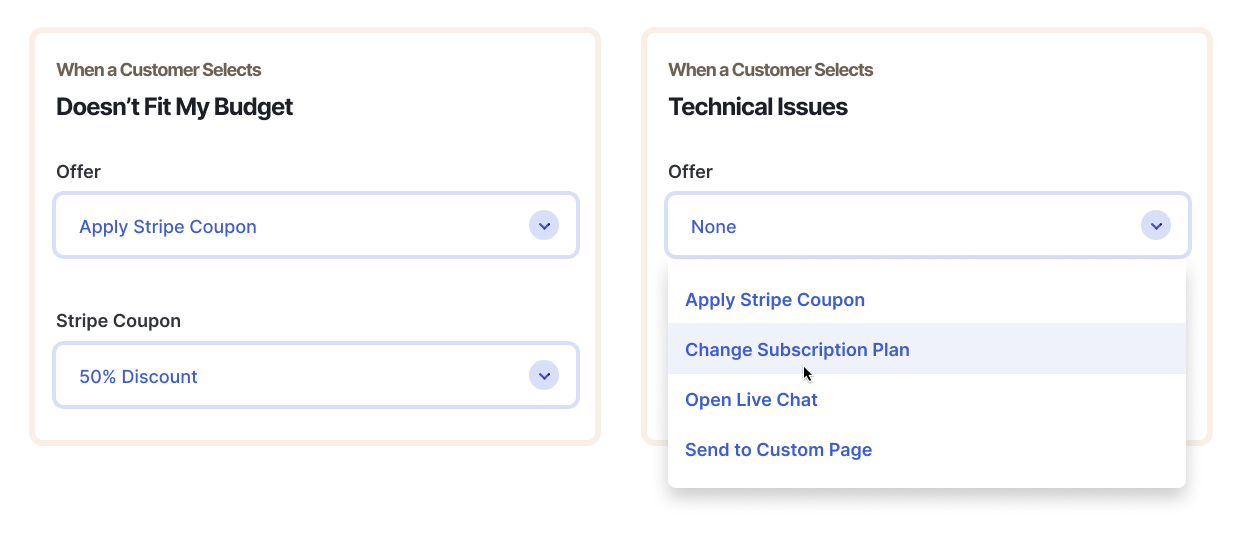

5.2 Dynamic offers at the point of cancellation

Based on a customer’s response to the cancellation survey, you can choose which offers you present. This means you can target customers with the offer most relevant to them (and the offer that’s most likely to keep them subscribed).

At this stage, relevance is essential, because your customer is signalling that their relationship with your product isn't what it used to be. Here is your chance to modify that relationship for the better.

5.3 Analytics to help you optimize over time

As more customers experience your cancellation flow, you can continually tweak and improve it to figure out what copy and what offers are most effective at preventing cancellations. Since Churnkey handles implementation, this means you can instantly make updates to your cancellation experience without needing to touch your own codebase.

In our experience, continuous optimization helped us improve customer retention from around 20-25% to a rate consistently above 30%. This relative improvement of roughly 40% has had a huge effect on MRR and long-term revenue potential.

With Churnkey’s built-in dashboard, keeping your eye on what offers are converting—and which aren't—is easier than ever.

5.4 Direct integration with Stripe, Chargebee, Braintree, Paddle, and Zuora (with others on the way!)

As great as Stripe’s documentation is, it still takes significant dev time to roll out dynamic discounts, subscription pauses, and track their performance. We’ve got your back on this one. Simply connect your Stripe account to Churnkey and we can do the heavy lifting for you while using Stripe’s best practices.

And if you do require a bit more of a custom solution, you can hook into our event callbacks to easily handle your unique business logic.

This Is About Building a Bigger Business

We’re excited to be leveraging the latest churn prevention patterns to help you grow your SaaS business! Don’t hesitate to reach out and talk about how our product can work for you, especially if:

- Your current monthly customer churn rate is greater than 5%

- You have more than 100 active subscriptions (if you have more than one cancellation a day, Churnkey will almost certainly give your growth ceiling a significant bump)

- You use Stripe as a payment provider (support for more payment providers coming soon)